Can AI Assess Your Personality? The Science

Explore what science reveals about AI-driven personality assessment. Compare LLM accuracy to human judges, review risks, and learn practical safeguards.

Quick answer

Can AI accurately assess your personality?

Yes — with caveats. Large language models can infer Big Five traits from everyday speech and writing at accuracy levels comparable to close acquaintances. However, results vary by model size, input type, and population. Clinician oversight and ethical safeguards remain essential.

Executive Summary

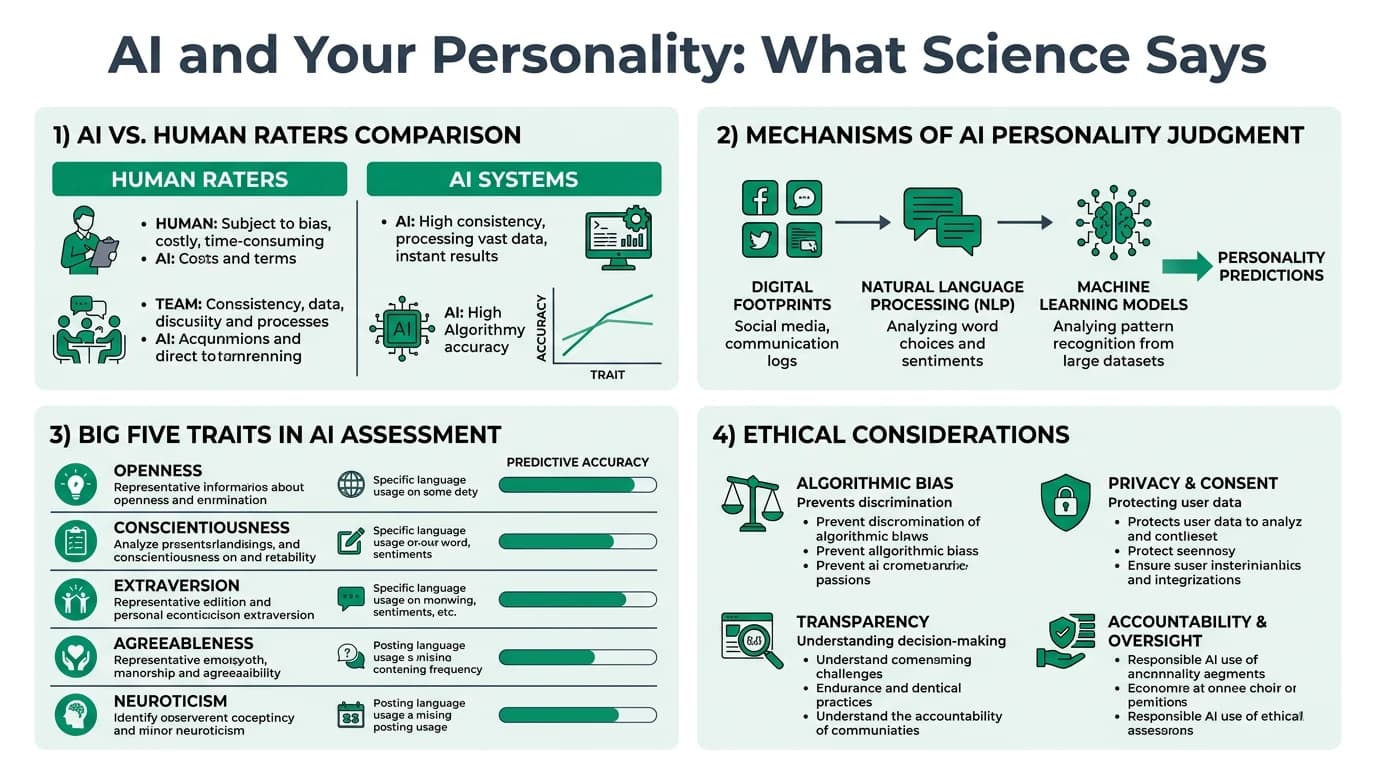

AI-driven personality assessment has moved from speculation to operational use. A growing body of peer-reviewed research shows that large language models (LLMs) can rate Big Five traits from naturalistic text with accuracy comparable to ratings by friends and family members 1.

This does not mean AI should replace validated questionnaires. It means a new class of tools is emerging — language-based, passive, and scalable — that complements traditional self-report instruments.

Key takeaway: AI personality inference works best as a screening layer or research tool. For high-stakes decisions (hiring, clinical diagnosis), it requires human oversight, validated instruments, and transparent methodology.

Important: AI-generated personality scores are probabilistic estimates, not clinical diagnoses. They should never be used as the sole basis for employment, clinical, or legal decisions.

How AI Infers Personality from Language

Modern personality inference relies on LLMs processing naturalistic language samples — diary entries, social media posts, interview transcripts, or spontaneous narratives.

- Feature extraction: the model identifies linguistic patterns (word choice, syntax complexity, emotional tone) correlated with trait dimensions.

- Zero-shot inference: newer LLMs can rate personality without trait-specific fine-tuning by leveraging their general language understanding 1.

- Prompt-based scoring: the model receives a text sample and a structured prompt asking it to rate the author on each Big Five dimension.
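The prompt-based scoring step can be sketched in Python. This is a minimal illustration, not the method used in the cited studies: the prompt wording, the 1-to-5 scale, the JSON reply format, and the function names `build_prompt` and `parse_ratings` are all assumptions, and the call to an actual model is deliberately left out.

```python
import json

TRAITS = ["openness", "conscientiousness", "extraversion",
          "agreeableness", "neuroticism"]


def build_prompt(text: str) -> str:
    """Build a structured rating prompt (wording is illustrative only)."""
    trait_list = ", ".join(TRAITS)
    return (
        "Read the text below and rate its author on each Big Five trait "
        f"({trait_list}) from 1 (very low) to 5 (very high). "
        "Reply with a JSON object mapping each trait to a number.\n\n"
        f"Text:\n{text}"
    )


def parse_ratings(reply: str) -> dict:
    """Validate a model reply; raise ValueError if it is malformed."""
    data = json.loads(reply)  # invalid JSON raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    ratings = {}
    for trait in TRAITS:
        if trait not in data:
            raise ValueError(f"missing trait: {trait}")
        value = float(data[trait])
        if not 1.0 <= value <= 5.0:
            raise ValueError(f"{trait} out of range: {value}")
        ratings[trait] = value
    return ratings


# Hypothetical reply, standing in for real model output.
example_reply = (
    '{"openness": 4, "conscientiousness": 3, "extraversion": 2, '
    '"agreeableness": 4, "neuroticism": 3}'
)
print(parse_ratings(example_reply))
```

Validating the reply before using it matters because a model can return malformed or out-of-range output; rejecting such replies is safer than silently scoring them.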

| Input type | Accuracy level | Best suited for | Limitations |
|---|---|---|---|
| Personal diary entries | High | Research, longitudinal tracking | Requires participant consent and rich text |
| Social media posts | Moderate-to-high | Large-scale screening | Platform-specific language norms may bias results |
| Interview transcripts | High | Hiring augmentation | Structured prompts improve consistency |
| Spontaneous narratives | High | Clinical and coaching | Requires sufficient text length (300+ words) |
| Short text messages | Low-to-moderate | Exploratory only | Insufficient linguistic signal |

A 2025 study at the University of Michigan found that GPT-4-class models rating video diary transcripts achieved correlations of 0.30–0.45 with self-report Big Five scores — comparable to ratings from close acquaintances 1.
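To make the correlation figures concrete, here is a small Python sketch of how agreement between model ratings and self-report scores is typically quantified with a Pearson correlation. The function name `pearson_r` and the six pairs of scores are made up for illustration and are not data from the study.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


# Hypothetical Extraversion scores for six people (illustrative only).
self_report = [2.0, 3.5, 4.0, 1.5, 3.0, 4.5]
llm_rating = [3.0, 3.0, 3.5, 2.5, 4.0, 3.5]

r = pearson_r(self_report, llm_rating)
print(f"agreement with self-report: r = {r:.2f}")
```

A value of 1.0 would mean the model ranks people exactly as their questionnaires do; values in the 0.30 to 0.45 range indicate a real but partial overlap.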

For how personality manifests in online behavior, see Personality and Social Media Behavior.

Accuracy Benchmarks: AI vs Human Judges

The critical question is not whether AI is perfect, but whether it matches or improves upon existing human judgment baselines.

| Judge type | Typical correlation with self-report (Big Five) | Strengths | Weaknesses |
|---|---|---|---|
| Self-report questionnaire | 1.00 (reference) | Validated, standardized | Social desirability bias, limited self-insight |
| Close friend or spouse | 0.30–0.50 | Behavioral observation over time | Halo effects, relationship bias |
| Stranger (thin-slice) | 0.10–0.25 | Unbiased by relationship | Limited information |
| LLM (GPT-4 class) | 0.30–0.45 | Scalable, consistent, no fatigue | Depends on text quality and quantity |
| LLM (smaller models) | 0.15–0.30 | Low-cost | Lower reliability, less consistent |
| Traditional NLP (LIWC-based) | 0.10–0.25 | Transparent features | Limited to word-count heuristics |

Key findings from recent research:

- LLM accuracy scales with model size: larger models produce more reliable and valid trait estimates 2.

- Trait-specific accuracy varies: Extraversion and Conscientiousness are easier to infer from text than Neuroticism or Agreeableness 1.

- Zero-shot LLM inference already outperforms older dictionary-based NLP methods (such as LIWC) by a significant margin 1.

Which Traits Are Easiest to Detect?

Not all Big Five dimensions are equally visible in language. Detectability depends on how directly a trait influences word choice and communication style.

| Big Five trait | AI detection accuracy | Why | Observable language markers |
|---|---|---|---|
| Extraversion | High | Strong lexical signal (social words, positive emotion) | Frequent social references, exclamation marks, group activities |
| Conscientiousness | High | Organized language, future planning references | Goal-oriented vocabulary, structured sentences |
| Openness | Moderate-to-high | Creative vocabulary and abstract concepts | Unusual word choices, philosophical references |
| Agreeableness | Moderate | Prosocial language overlaps with politeness norms | Hedging words, compliments, collaborative framing |
| Neuroticism | Moderate | Anxiety and negative emotion words | Negative emotion terms, uncertainty markers, self-focused language |

Practitioners should therefore weight AI-inferred scores by trait: an Extraversion estimate warrants more confidence than a Neuroticism estimate drawn from the same text sample 1.

LLM Personality: Do AI Models Have Traits?

A separate but related question is whether LLMs themselves exhibit stable personality-like patterns when completing personality questionnaires.

- Researchers at Cambridge and Google DeepMind developed the first validated psychometric framework for testing LLM "personality" 2.

- Large instruction-tuned models (GPT-4, Claude 3) show moderate internal consistency on Big Five items — meaning their response patterns resemble a coherent personality profile.

- Smaller or base models show low consistency and high sensitivity to prompt wording.

| Model category | Big Five internal consistency | Response stability across prompts | Practical implication |
|---|---|---|---|
| Large instruction-tuned (GPT-4, Claude) | Moderate (alpha 0.60–0.75) | Moderate-to-high | Can simulate consistent personas |
| Mid-size instruction-tuned | Low-to-moderate (alpha 0.45–0.60) | Variable | Unreliable for persona simulation |
| Base models (no RLHF) | Low (alpha below 0.45) | Low | Not suitable for personality tasks |

This matters because AI systems used for personality assessment must themselves be consistent. An unreliable "judge" cannot produce reliable "judgments" 2.
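Assuming the alpha values above are Cronbach's alpha, the usual internal-consistency statistic, the calculation can be sketched as follows. The function name `cronbach_alpha` and the response matrix are hypothetical. For an LLM, each row might be one run of the questionnaire under a different prompt variation, which is an assumption about study design rather than something the sources specify.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a matrix where responses[i][j] is the score
    of respondent (or model run) i on questionnaire item j."""
    n_rows = len(responses)
    n_items = len(responses[0])
    if n_rows < 2 or n_items < 2:
        raise ValueError("need at least 2 rows and 2 items")
    # Sample variance of each item (column) across rows.
    item_variances = [variance(col) for col in zip(*responses)]
    # Sample variance of each row's total score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("alpha is undefined when total scores do not vary")
    return n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical scores on three Extraversion items across four runs.
runs = [
    [4, 5, 4],
    [2, 3, 2],
    [5, 4, 5],
    [3, 3, 4],
]
print(f"alpha = {cronbach_alpha(runs):.2f}")
```

Alpha approaches 1 when items that are meant to measure the same trait rise and fall together across rows, and drops when item responses vary independently of one another.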

For broader assessment quality context, see Personality Test Reliability.

Applications in Practice

AI personality inference is already being used — and misused — across several domains.

| Application | Current maturity | Evidence strength | Key risk |
|---|---|---|---|
| Research data collection | Operational | Strong | Consent and privacy protocols needed |
| Pre-screening for hiring | Emerging | Moderate | Bias, lack of transparency, legal liability |
| Clinical augmentation | Experimental | Growing | Must not replace clinical judgment |
| Coaching and development | Emerging | Moderate | Framing as insight, not diagnosis |
| Social media profiling | Operational (commercial) | Variable | Consent violations, surveillance risk |
| Fraud detection | Experimental | Limited | High false positive risk |

Responsible Use Principles

- Transparency: disclose when AI is used to assess personality.

- Consent: obtain informed consent for language-based profiling.

- Validation: use AI scores alongside — not instead of — validated instruments.

- Oversight: maintain clinician or psychologist review for high-stakes decisions.

- Bias auditing: regularly test AI outputs for demographic bias.
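As one possible starting point for the bias-auditing principle, the sketch below compares the average signed error of AI scores (AI score minus self-report score) across demographic groups. The function names, group labels, and records are hypothetical, and a real audit would also need adequate sample sizes and significance testing.

```python
from collections import defaultdict
from statistics import mean


def mean_error_by_group(records):
    """Average signed error (AI score minus self-report) per group.

    records: iterable of (group_label, ai_score, self_report_score).
    """
    errors = defaultdict(list)
    for group, ai_score, self_report in records:
        errors[group].append(ai_score - self_report)
    return {group: mean(values) for group, values in errors.items()}


def largest_gap(group_means):
    """Difference between the most over-rated and most under-rated group."""
    values = list(group_means.values())
    return max(values) - min(values)


# Hypothetical audit records for one trait (illustrative only).
records = [
    ("group_a", 3.0, 3.0),
    ("group_a", 4.0, 3.0),
    ("group_b", 2.0, 3.0),
    ("group_b", 3.0, 3.0),
]
by_group = mean_error_by_group(records)
print(by_group)
print("largest gap between groups:", largest_gap(by_group))
```

A gap near zero suggests the tool over- or under-rates groups to a similar degree; a large gap is a signal to investigate before the scores inform any decision.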

For hiring-specific validation guidance, see Personality Test Validity in Hiring.

Ethical Risks and Safeguards

The more capable AI personality inference becomes, the greater the ethical risks that practitioners must manage proactively.

| Risk category | Description | Mitigation strategy |
|---|---|---|
| Consent violation | Inferring personality without explicit permission | Mandatory opt-in with clear disclosure |
| Demographic bias | Models may rate traits differently across gender, age, or cultural groups | Regular bias audits across protected categories |
| Personality manipulation | AI-inferred profiles could be used for targeted persuasion | Restrict access to raw trait scores; enforce data minimization |
| Self-concept distortion | Receiving AI-generated personality feedback may alter self-perception | Frame results as hypotheses, not facts |
| Over-reliance | Treating AI scores as ground truth rather than probabilistic estimates | Require human-in-the-loop for all consequential decisions |
| Data security | Linguistic data used for inference is highly personal | Encrypt, anonymize, and delete after analysis |

Research suggests that extended AI interaction can shift users' self-concept toward the AI's expressed personality profile, a subtle but real homogenization risk 3.

Limitations of Current AI Approaches

AI personality inference is promising but far from mature. Practitioners should understand its boundaries.

- Text length dependency: accuracy drops significantly for samples under 300 words. Short texts produce noisy estimates.

- Context sensitivity: language style shifts across contexts (formal email vs. casual chat). A single context sample may not represent the whole person.

- Cultural and linguistic bias: most training data is English-dominant. Cross-cultural validity is uncertain.

- Temporal instability: a person's language today may not reflect their stable trait profile. Multiple samples over time improve reliability.

- Explainability gap: LLMs provide scores but not transparent reasoning. Users cannot easily audit why a particular rating was assigned.

| Limitation | Impact on practice | Workaround |
|---|---|---|
| Short text samples | Low reliability | Require minimum 300-word samples |
| Single context | Biased estimate | Collect language from multiple settings |
| English-dominant training | Cross-cultural inaccuracy | Validate with local norm groups |
| One-time snapshot | Temporal noise | Aggregate multiple samples over weeks |
| Black-box scoring | Low trust and auditability | Use explainable AI methods where available |
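Two of these workarounds, the minimum sample length and aggregation over multiple samples, are simple to enforce in code. The sketch below is one way to do it: the 300-word threshold comes from this article, while the function names, the whitespace-based word count, and the plain average are assumptions chosen for simplicity.

```python
from statistics import mean
from typing import List, Optional, Tuple

MIN_WORDS = 300  # minimum sample length recommended in this article


def has_enough_text(sample: str, min_words: int = MIN_WORDS) -> bool:
    """Check sample length using a simple whitespace word count."""
    return len(sample.split()) >= min_words


def aggregate_scores(scored_samples: List[Tuple[str, float]]) -> Optional[float]:
    """Average one trait's scores over samples that pass the length check.

    scored_samples: (text, trait_score) pairs, ideally from different
    contexts and dates. Returns None if no sample is long enough.
    """
    usable = [score for text, score in scored_samples if has_enough_text(text)]
    return mean(usable) if usable else None


# Hypothetical samples: two long enough to score, one too short.
long_text = "word " * 300
short_text = "too short to score"
samples = [(long_text, 4.0), (long_text, 2.0), (short_text, 5.0)]
print(aggregate_scores(samples))
```

Returning `None` when no sample qualifies, rather than a score, keeps downstream code from treating an unreliable estimate as a valid one.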

Future Directions

The field is moving rapidly. Several trends will shape AI personality assessment over the next three to five years.

- Multimodal inference: combining text, voice, and facial expression data for more robust trait estimation.

- Clinician-AI collaboration: tools that provide AI-generated hypotheses for psychologists to review and refine.

- Personalized assessment: AI adapting questionnaire items in real time based on initial responses.

- Regulatory frameworks: the EU AI Act and similar legislation will likely classify personality inference as high-risk AI.

- Longitudinal tracking: AI monitoring personality change over time from ongoing digital interactions.

Readiness checklist for AI personality tools

- Verify the tool has published validation data (correlation with established instruments).

- Confirm informed consent protocols are in place for all assessed individuals.

- Check for demographic bias audits across gender, age, ethnicity, and language.

- Ensure a qualified human reviewer is involved in all consequential decisions.

- Establish data retention and deletion policies for linguistic samples.

- Review legal compliance with local AI and employment regulations.

For practical guidance on debriefing assessment results, see Personality Test Debriefing Best Practices.

FAQ

How accurate is AI at assessing Big Five personality traits?

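Large language models (GPT-4 class) achieve correlations of 0.30–0.45 with self-report Big Five scores when analyzing sufficient text. This is comparable to ratings from close acquaintances (0.30–0.50). Validated self-report questionnaires remain the reference standard in these comparisons, so the figures describe how closely AI agrees with questionnaires, not a substitute for them 1.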

Can AI personality assessment replace traditional questionnaires?

Not yet. AI inference is best used as a complementary tool — for screening, research, or enriching traditional assessments. It lacks the standardization, norm groups, and legal defensibility of validated instruments 2.

What are the biggest ethical risks of AI personality inference?

The main risks are consent violations (profiling without permission), demographic bias (differential accuracy across groups), personality manipulation (using profiles for persuasion), and over-reliance (treating probabilistic scores as definitive) 3.

Do larger AI models produce better personality assessments?

Yes. The research cited here indicates that assessment reliability and validity increase with model size. Instruction-tuned models with more parameters produce more stable and accurate trait estimates 2.

How much text does AI need to assess personality?

A minimum of 300 words is typically needed for reasonable accuracy. Accuracy improves with longer samples (1,000+ words) and when text comes from multiple contexts rather than a single source 1.

Is AI personality assessment legal for hiring?

It depends on jurisdiction. The EU AI Act classifies employment-related AI as high-risk, requiring transparency and human oversight. In the US, EEOC guidance applies anti-discrimination standards. Organizations should consult legal counsel before deployment 4.

Can AI detect personality changes over time?

In principle, yes. By analyzing language samples collected at different time points, AI can track trait-level shifts. However, distinguishing genuine personality change from contextual language variation requires multiple data points and careful methodology 1.

What is the difference between AI assessing personality and AI having personality?

AI assessing personality uses language analysis to estimate human traits. AI "having" personality refers to the stable response patterns LLMs exhibit on personality questionnaires — a property of the model's training, not genuine psychological experience 2.

Notes

Primary Sources

| Source | Type | URL |
|---|---|---|
| Vize et al. (2025), Nature Human Behaviour | Peer-reviewed study on LLM personality inference | doi.org/10.1038/s41562-024-02077-2 |
| Cambridge / DeepMind (2024) | Psychometric framework for LLMs | cam.ac.uk |
| Serapio-Garcia et al. (2024), arXiv | LLM personality traits research | arxiv.org/abs/2307.00184 |
| PAR Inc. (2025) | Assessment industry trends report | parinc.com |

Conclusion

AI can assess personality from language with meaningful accuracy — comparable to close acquaintances and far better than strangers or older NLP tools. But "can" does not mean "should without guardrails."

The responsible path forward treats AI personality inference as a powerful augmentation layer: useful for research, screening, and hypothesis generation, but always subordinate to validated instruments, qualified practitioners, and informed consent.

Footnotes

1. Vize, C. E., Ringwald, W. R., Grunberg, V. A., Allen, T. A., & Wright, A. G. C. (2025). AI can reveal your personality from everyday speech and writing. Nature Human Behaviour. https://doi.org/10.1038/s41562-024-02077-2

2. Huang, J., Lam, M. H., Li, E., et al. (2024). Psychometric evaluation of large language models. University of Cambridge / Google DeepMind. https://neuroscience.cam.ac.uk/researchers-develop-the-first-scientifically-validated-psychometric-framework-for-large-language-models/

3. Serapio-Garcia, G., Safdari, M., Crepy, C., et al. (2024). Personality traits in large language models. arXiv preprint. https://arxiv.org/abs/2307.00184

4. PAR, Inc. (2025). Emerging trends in psychological assessment for 2026. PAR Learning Center. https://www.parinc.com/learning-center/par-blog/detail/blog/2025/10/28/emerging-trends-in-psychological-assessment-for-2026